Once Again, The Doorknob

On Affordance, Forgiveness and Ambiguity in Human Computer and Human Robot Interaction

Keynote at Rethinking Affordance Symposium

Akademie Schloss Solitude, 8 June 20181

I think it is absolutely wonderful that there is an event about affordance and an idea that this concept could be rethought. I guess you invited me to talk as an artist who is critically reflecting on the medium she is working with. Indeed as a net artist I do my best to show the properties of the medium, and as a web archivist and Digital Folklore researcher I examine the way users deal with the world they’re thrown into by developers. I suggest we talk about these aspects later, during the Q and A session because it would be good to start in the more applied context of HCI and interface design, since this is where the term lives now and where it is discussed and interpreted. These interpretations affect crucial matters.

The following might sound like an introduction or a lengthy side note, but in fact it is what I really want to tell you today. Interface design is a very powerful profession and occupation, a field where a lot of decisions are made, gently and silently. Not always with bad intentions, very often without any intention at all. But decisions are made, metaphors chosen, idioms learned, affordances introduced—and the fact that they were just somebody’s impulsive picks doesn’t make them less important.

To say that design of user interfaces influences our daily life is a commonplace and an understatement. User interfaces influence people’s understanding of processes, form relations with the companies that provide services. Interfaces define roles computer users get to play in computer culture.

I teach students who, if they don’t change their mind, will become interface designers (or “front end developers,” or “UX designers,” there are many different terms and each of them could be a subject of investigation.) I strongly believe that interface designers should not start to study by trying to make their first prototype of something that looks the same or better or different from what already exists; they shouldn’t learn functions and tricks in Sketch, master drop shadows and rounded corners. I know, that’s easy to state, but what is the alternative? It would be strange to expect or demand that they study philosophy, cybernetics, Marxism, dramaturgy and arts (though all these would be very desirable)—and only afterwards make their first button or gesture.

The compromise I found is introducing them to key texts that reveal what power designers of user interfaces have and that there is no objective reality or reasoning, no nature of things, no laws, no commandments; only decisions that were and will be made consciously or unconsciously.

“It is important for designers and builders of computer applications to understand the history of transparency, so that they can understand that they have a choice.”2

This quote is from the very beginning of the book Windows and Mirrors, written by Jay Bolter and Diana Gromala 15 years ago. Unfortunately the book—relatively well-known in new media theory since one of the authors coined the term Remediation3—is largely ignored in interface design circles. Unfortunately because it questions mainstream practices based on the postulate that the best interface is intuitive, transparent, or actually no interface.

The book very much corresponds to the conference call you published,4 because it is almost exclusively artists who choose reflectivity over transparency, and these are artists who are re-thinking, re-imagining, and sometimes manage to intervene and correct the course of events.

Ten years ago I invited my former student and artist Johannes Osterhoff to teach the basics (in our common understanding of what basics are) of interface design. You may know his witty year-long performances Google,5 iPhone live,6 Dear Jeff Bezos,7 and other works that reflect on algorithmic and interactive regimes. For his artistic practice, Johannes calls himself “interface artist,” a quite unique self-identification.

He named his course after the book Windows and Mirrors and guided students to create projects that were all about looking at interfaces, reflecting upon metaphors, idioms, and affordances.

Soon after, Johannes took the position of Senior UX Designer at SAP, one of the world’s biggest enterprise software corporations (and it is also not a side note, I will come back to this fact later.) So I took over the course from him a few years ago.

Where do I start with interface design in 2018?

I begin with an essay published in 1991 in Brenda Laurel’s The Art of Human-Computer Interface Design,8 a book that I rediscover and rediscover for myself year after year. It contains articles by practitioners who now, almost three decades later, have either turned into pop stars (heroes of the electronic age), been forgotten, or been recently rediscovered. In 1990, five years after “the rest of us” had their first experience with graphical user interfaces, they convened to analyze what went wrong and what could be done about these mistakes.

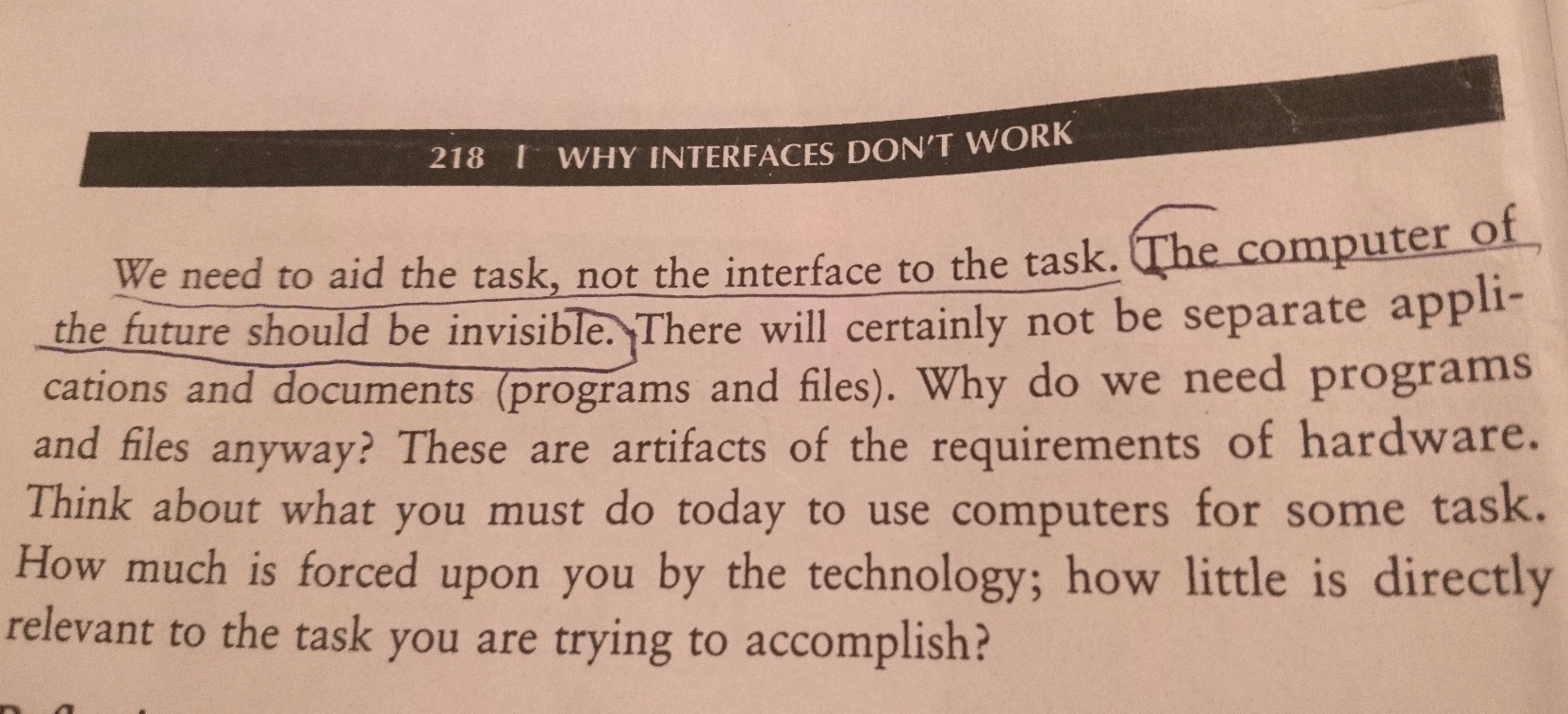

The text I ask students to read is Why Interfaces Don’t Work by Don Norman. It contains statements that have already been quoted and referenced by several generations of interface designers:

- The problem with the interface is that there is an interface.9

- What are computers for? The user, that’s why – making life easier for the user.10

- Make the task dominate, make the tools invisible.11

- The computer of the future should be invisible.12

transcription

We need to aid the task, not the interface to the task. The computer of the future should be invisible. There will certainly not be separate applications and documents (programs and files). Why do we need programs and files anyway? These are artifacts of the requirements of hardware. Think about what you must do today to use computers for some task. How much is forced upon you by the technology; how little is directly relevant to the task you are trying to accomplish?

Curiously, these particular points were not typographically emphasized by the author himself, but they nevertheless became a manifesto and the mainstream paradigm for thinking about computers.

In Why Interfaces Don’t Work, sentence after sentence, metaphor after metaphor, Norman claims that users of computers are interested in anything but the computers themselves; they want to spend the least time possible with a computer. As a theoretician and, more importantly, as a practitioner at Apple, Norman was indeed pushing the development of invisible or transparent interfaces. This is how the word “transparent” started to mean “invisible” or “simple” in interface design circles.

Sherry Turkle sums up this swift development in the 2004 introduction to her 1984 book The Second Self:

“In only a few years the ‘Macintosh meaning’ of the word Transparency had become a new lingua franca. By the mid-1990s, when people said that something was transparent, they meant that they could immediately make it work, not that they knew how it worked.”13

The idea that users shouldn’t even notice that there is an interface was widely and totally accepted and seen as a blessing. Jef Raskin, initiator of the Macintosh project and author of many thoughtful and otherwise highly recommended texts, writes at the very beginning of The Humane Interface:

“Users do not care what is inside the box, as long as the box does what they need done. […] What users want is convenience and results.”14

Period. No manuals or papers that would contradict. Though in practice we could see alternatives: works of media artists, discussed in the aforementioned Windows and Mirrors, and of course the web of the 90’s.

The best counterexample to users not wanting to think about interfaces is early web design, where people were constantly busy envisioning and developing interfaces.

Sorry, I can’t stop myself from showing you some examples from my One Terabyte of Kilobyte Age archive. I hope you can sense that the people who created these pages developed against the invisibility and transparency of interfaces.

I have many more. But back to Norman: to support his intention of removing the interface from even the peripheral view of the user, he quotes himself from The Psychology of Everyday Things15 and lifts the doorknob metaphor from industrial design to the world of HCI.

“A door has an interface – the doorknob and other hardware – but we should not have to think of ourselves using the interface to the door: we simply think about ourselves as going through the door or closing or opening the door.”16

I really don’t know any mantra that has been quoted more often in interface design circles.

You may ask: if I am obviously sarcastic about and disagree with the points Norman makes, why do I ask students to read exactly this text? The reason is the sentence right after the previous quote:

“The computer really is special: it is not just another mechanical device.”17

No one ever wants to refer to this moment of weakness; already in the next phrase Norman says that the metaphor applies anyway and the computer’s purpose is to simplify lives.

But this “not just another mechanical device” is the most important thing I like to make students aware of: the complexity and beauty of general purpose computers. Their purpose is not to simplify life. It is maybe a side effect sometimes. The purpose was or could have been man-computer symbiosis. “The question is not what is the answer the question is what is the question,”18 Licklider quoted the French philosopher Henri Poincaré when he wrote his programmatic Man-Computer Symbiosis, meaning that computers as colleagues should be a part of formulating questions.

The purpose could be Bootstrapping as in Engelbart19 or, as Vilém Flusser formulated in 1991 in Digitaler Schein20 (the same year Norman’s text was published!), “Verwirklichen von Möglichkeiten,”21 realizing opportunities. All this is quite different from “making life easier.”

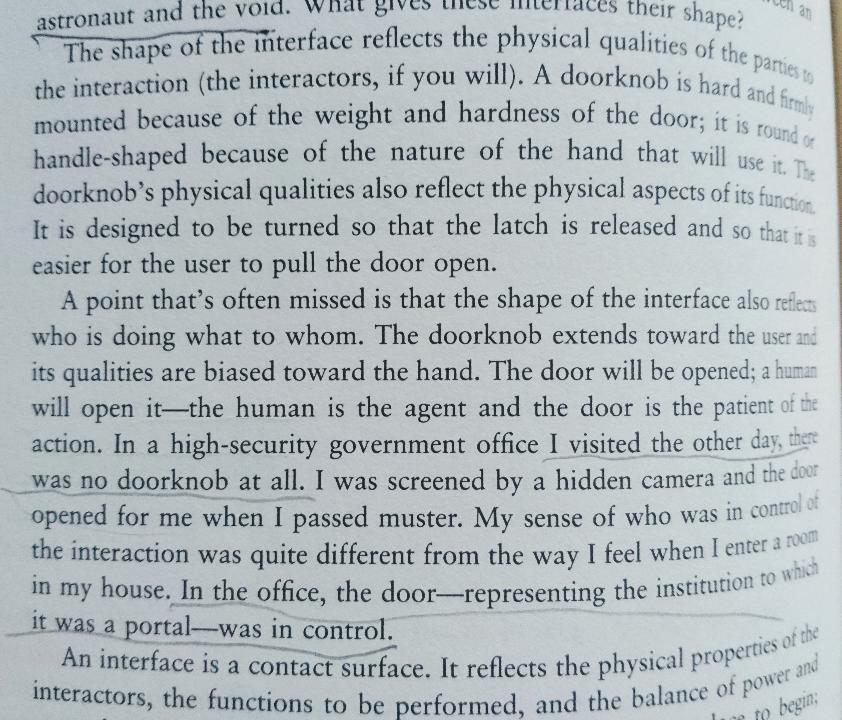

One can sense that Norman’s colleagues and contemporaries were not that excited about the doorknob metaphor. In a short introductory article “What is Interface,” Brenda Laurel diplomatically notes that in fact doorknobs and doors radiate complexity, control and power, “who is doing what to whom.”22

transcription

The shape of the interface reflects the physical qualities of the parties to the interaction (the interactors, if you will). A doorknob is hard and firmly mounted because of the weight and the hardness of the door; it is round or handle-shaped because of the nature of the hand that will use it. The doorknob’s physical qualities also reflect physical aspects of its function. It is designed to be turned so that the latch is released and so that it is easier for the user to pull the door open.

A point that’s often missed is that the shape of the interface also reflects who is doing what to whom. The doorknob extends toward the user and its qualities are biased towards the hand. The door will be opened; a human will open it—the human is the agent and the door is the patient of the action. In a high-security government office I visited the other day, there was no doorknob at all. I was screened by a hidden camera and the door opened for me when I passed muster. My sense of who was in control of the interaction was quite different from the way I feel when I enter a room in my house. In the office, the door—representing the institution to which it was a portal—was in control.

In 1992 French philosopher Bruno Latour, who according to his reference list was acquainted with Norman’s writings, published Where Are the Missing Masses? The Sociology of a Few Mundane Artifacts.23 The text contains the mind-blowing section “Description of the door” that canonizes the door as a “miracle of technology” which “maintains the wall hole in a reversible state.” Word by word, through his investigation of a note pinned onto a door (“The groom is on Strike, For God’s Sake, Keep the door Closed”) and with elaboration on every mechanical detail (knobs, hinges, grooms), he dismantles Norman’s intention to perceive the doorknob as something simple, obvious, and intuitive.

***

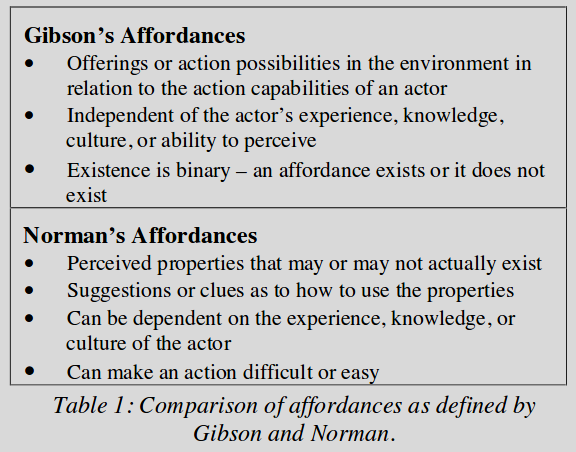

Why Interfaces Don’t Work does not mention the word affordance, but the doorknob is a symbol of it, accompanying the term from one design manual to another. And more importantly, it was again Don Norman who among other things (or should I say first and foremost) adapted and reinterpreted the term affordance, originally coined by the ecological psychologist James J. Gibson, for the world of Human Computer Interaction.

A very good basic summary of the topic was written by Victor Kaptelinin in the article on affordances in the 2nd edition of the Encyclopedia of HCI, a highly recommended resource.

“Affordance is […] considered a fundamental concept in HCI research and described as a basic design principle in HCI and interaction design.”24

Affordance as in Norman, not in Gibson.

transcription

Gibson’s Affordances

- Offerings or action possibilities in the environment in relation to the action capabilities of an actor

- Independent of the actor’s experience, knowledge, culture or ability to perceive

- Existence is binary—an affordance exists or it does not exist

Norman’s Affordances

- Perceived properties that may or may not actually exist

- Suggestions or clues as to how to use the properties

- Can be dependent on the experience, knowledge, or culture of the actor

- Can make an action difficult or easy

The difference is properly explained in a widely quoted table from Affordances: Clarifying and Evolving a Concept by Joanna McGrenere and Wayne Ho written in 2000.25 The authors summarize the shift:

“Norman […] is specifically interested in manipulating or designing the environment so that utility can be perceived easily.”

…or vice versa…

“Unlike Norman’s inclusion of an object’s perceived properties, or rather, the information that specifies how the object can be used, a Gibsonian affordance is independent of the actor’s ability to perceive it.”26

As we know, Don Norman later admitted27 to misinterpreting the term, corrected it to “perceived affordances,” and apologized for starting the mess and the devaluation of the term.28

“Far too often I hear graphic designers claim that they have added an affordance to the screen design when they have done nothing of the sort. Usually they mean that some graphical depiction suggests to the user that a certain action is possible. This is not affordance, either real or perceived. Honest, it isn’t. It is a symbolic communication, one that works only if it follows a convention understood by the user.”29

Almost 20 years later, as the community has grown, the claims have become even more ridiculous, with the word affordance being used by UX designers in all possible meanings, as a synonym for whatever.

When I started to work on this lecture, Medium.com, which always knows what I am interested in at the moment, delivered to me a fresh 11-minute read on uxplanet.org: How to use affordances in UX.30 Already the title indicates confusion, though not to the author, who obviously thinks that an affordance is an element of an app and that the word can be used as a synonym for Menu, Button, Illustration, Logo, or Photo. The article references a three-year-old text31 laying out six rather absurd types of affordances: explicit, hidden, pattern, metaphorical, false, and negative.

This terminological mess is nothing new for the design discipline; also, the word affordance and its usage are not the biggest deal. There are other terms at stake, such as “transparency” or “experience,” whose usage is more troubling. Maybe this affordance clownery could be ignored, or could even be seen positively as a commendable attempt to bring sense into a world of clicking, swiping and drag-and-dropping; a good intention to contextualize these actions and to interpret them through psychology and philosophy.

But I’d also like to mention that this urge to talk about and define affordances is not so innocent, with affordance being a cornerstone of the HCI paradigm User Centered Design, which was coined32 and conceptualized by (again!) Don Norman in the mid-1980’s, as well as of the User Experience bubble that (again!!) Don Norman started.33 Both blew up in 1993 when he became head of research at Apple. User Experience or UX swallowed other possible ways to see what an interface is and how it could be.

In my essay Rich User Experience, UX and Desktopization of War34 I wrote about the danger of scripting and orchestrating user experiences; in Turing Complete User35 I mention that it is very difficult to criticize the concept, because it has developed a strong aura of doing the right thing, of “seeing more,” “seeing beyond,” etc.

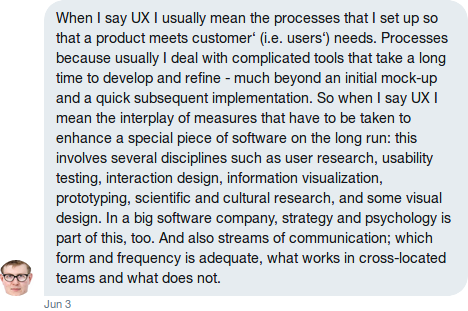

I asked the aforementioned Johannes Osterhoff about his interpretation of UX. Quoting from his direct message:36

transcription

“When I say UX I usually mean the processes that I set up so that a product meets customer’s (i.e. users’) needs. Processes because usually I deal with complicated tools that take a long time to develop and refine—much beyond an initial mock-up and quick subsequent implementation. […] I mean the interplay of measures that have to be taken to enhance special piece of software [in] the long run: this involves several disciplines such as user research, usability testing, interaction design, information visualization, prototyping, scientific and cultural research, and some visual design. In a big software company, strategy and psychology [are] part of this, too. And also streams of communication; which form and frequency is adequate, what works in cross-located teams and what does not.”

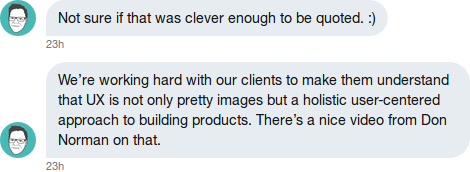

Another former student, Florian Dusch, principal of the software design and research company “zigzag” in Stuttgart, when answering my question also refers to UX as “many things,” “holistic,” and “not only pretty images.”37

transcription

Not sure if that was clever enough to be quoted. :)

We're working hard with our clients to make them understand that UX is not only pretty images, but a holistic user-centered approach to building products. There’s a nice video from Don Norman on that.

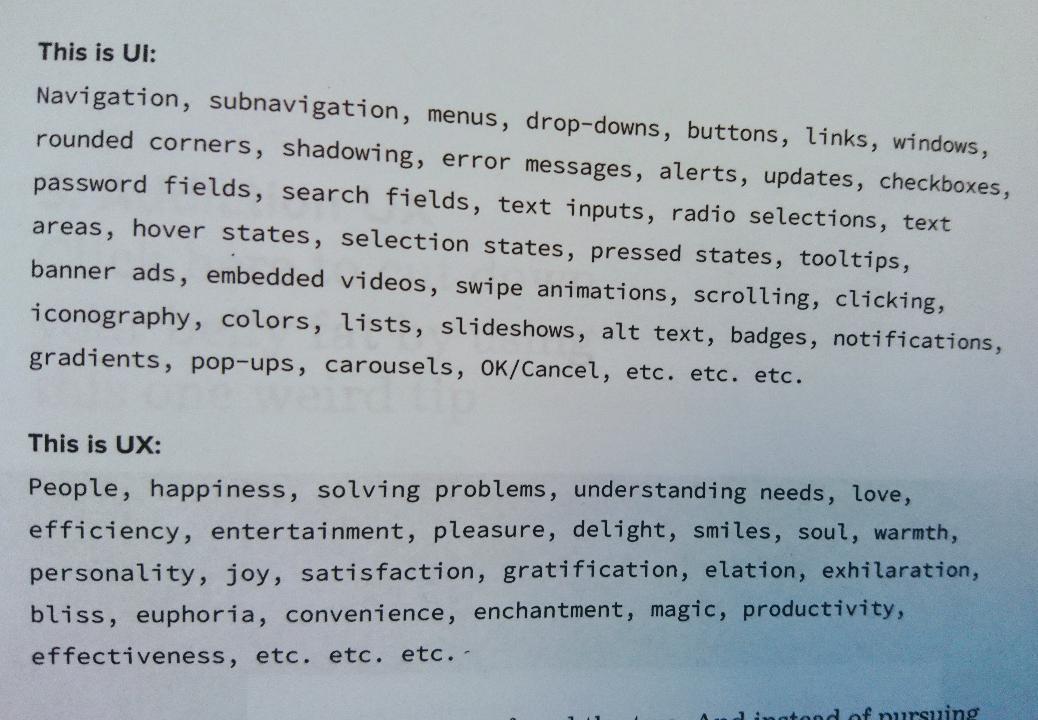

The next quote is from The Best Interface Is No Interface,38 a very expressive book brought to the world in 2015 by Golden Krishna who “currently works at Google on design strategy to shape the future of Android:”39

transcription

This is UI:

Navigation, subnavigation, menus, drop-downs, buttons, links, windows, rounded corners, shadowing, error messages, alerts, updates, checkboxes, password fields, search fields, text inputs, radio selections, text areas, hover states, selection states, pressed states, tooltips, banner ads, embedded videos, swipe animations, scrolling, clicking, iconography, colors, lists, slideshows, alt text, badges, notifications, gradients, pop-ups, carousels, OK/Cancel, etc. etc. etc.

This is UX:

People, happiness, solving problems, understanding needs, love, efficiency, entertainment, pleasure, delight, smiles, soul, warmth, personality, joy, satisfaction, gratification, elation, exhilaration, bliss, euphoria, convenience, enchantment, magic, productivity, effectiveness, etc. etc. etc.

The German academic Marc Hassenzahl also delivers a wonderful definition of UX by introducing himself on his website:

“He is interested in designing meaningful moments through interactive technologies – in short: Experience Design.”40

Already from this small selection of quotes by people who have been in the business for a long time and know what they do, you can sense that UX is big, big and good, bigger and better than... small-minded and petty things.

The paradox is that technically, when it comes to practice, the products of User Experience Design contradict its image and aura. UX is about nailing things down; it has no place for ambiguity or open-ended processes.

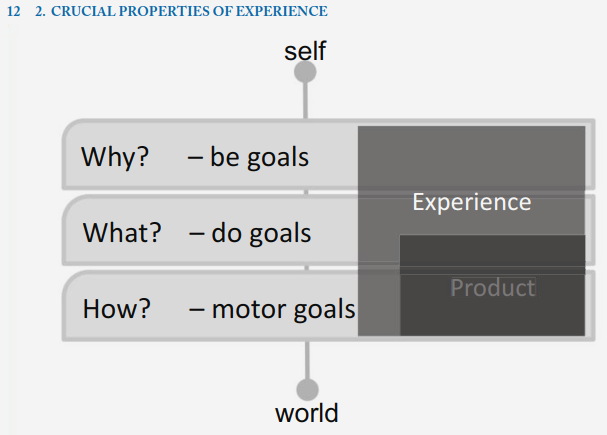

Marc Hassenzahl is contributing to the scene not only by poetic statements and interviews. In fact in his 2010 book Experience Design: Technology for all the right reasons he proclaims “the algorithm for providing the experience”41 in which the “why” is a crucial component, a hallmark that justifies UX’s distinguished position.

In a series of video interviews42 Hassenzahl recorded with the Interaction Design Foundation he states that people don’t just want to make a phone call; there is a different reason behind each call: business, a goodnight kiss, checking if a kid is at home, ordering food. And all those “whys” need their own design on both the software and the hardware level. Again, an ideal UX phone is a different phone for each need, or at least a different app for each type of call.

The Why of UX is not a philosophical but a pragmatic question that could be substituted with “what exactly?” and “who exactly?”

User Experience Design is a successful attempt to overcome the historic accident that Don Norman holds responsible for the difficult-to-use interfaces of the late 1980’s:

“We have adapted a general purpose technology to very specialized tasks while still using general tools.”43

Here is a fresh insight from the studio “UX Collective” on how to train your UX skills:

“It’s a good idea to limit yourself by imposing some assumptions, constraints, and a platform (mobile / desktop / tablet etc). If working in pairs, one person could pick a problem, and the partner could refine it. So choose one of the following, decide on a mobile or desktop solution, and then keep asking questions.”44

The list has 100 suggestions; here are a few:

transcription

- Create an alarm clock.

- Create an internal tool that allows a major TV network to tag and organize their content.

- Create a time tracker.

- Create a chat-bot for financial decisions.

- Create a music player.

- Create a smart mirror.

- Prompt the user to engage in a daily act of kindness.

- Track your health with some kind of wearable tech.

- Locate your locked bike and be informed if it moves.

- Prevent your parked car from being stolen while you go on holiday.

- Build a smart fridge.

“We can design in affordances of experiences”45 said Norman in 2014. What a poetic expression if you forget that “affordance” in HCI means an immediate, unambiguous clue, and “experience” is an interface scripted for a very particular narrow scenario.

There are many such examples of tightly scoped scenarios around. To name one that is getting public attention right now: in early May 2018, in the middle of the Cambridge Analytica scandal, Facebook announced an app for long-term relationships:46 real long-term relationships, not just “hook-ups,” to quote Mark Zuckerberg. If you are familiar with my position on general purpose computers and general purpose users, you know that I believe there should be no dating apps at all; not because I am against dating, but because I think that people can date using general purpose software: they can date in email, in chats, they can date in Excel and Etherpad. But if the free market demands dating software, it should be made without asking “why?” or “what exactly?”, “hook-up or long term relationship?”, etc.

Please allow me again to show a screenshot or two of old web pages. I have a before_ category in the One Terabyte of Kilobyte Age archive, which I assign to pages that their authors created for a purpose that is nowadays taken over by industrialized, centralized tools and platforms. The first category is before_flickr, the next before_googlemaps. The last one reminds me of ratemyprofessors.com, so I tagged it before_ratemyprofessor. These pages are dead and none of them became successful, but they are examples of users finding their ways to do what they desire in an environment that is not exclusively designed for their goals: this is what I would call a true user experience. It is totally against the ideology of UX.

***

So, apart from contradicting Don Norman’s call and saying that Computers of the Future should be visible, I’d like to suggest finally disconnecting the term affordance from Norman’s interpretation; disconnecting affordance from experience, from the ability to perceive (as in Gibson), and from experience design needs; seeing affordances as options for possibilities of action; insisting on the General Purpose Computer’s affordance to become anything if you are given the option to program it; and perceiving the opportunities and risks of a world that is not restrained to mechanical age laws and artifacts.

In the chapter on affordance, the authors of the influential interaction design manual About Face (which for many years was subtitled “the essentials of interaction design,” changed in the latest edition to “classic of creating delightful user experiences”) observe:

“A knob can open a door because it is connected to a latch. However in a digital world, an object does what it does because a developer imbued it with the power to do something […] On a computer screen though, we can see a raised three dimensional rectangle that clearly wants to be pushed like a button, but this doesn’t necessarily mean that it should be pushed. It could literally do almost anything.”47

Throughout the chapter, designers are advised to resist this opportunity, to be consistent, and to follow conventions. Because indeed everything is possible in the world of zeroes and ones, they introduce the notion of a “contract:”

“When we render a button on the screen we are making a contract with the user […]”48

If there is a button on screen it should be pressed, not dragged-and-dropped, and should respond accordingly. And they are absolutely right… but only when the interface is limited to knobs and buttons.

When Bruno Latour wanted his readers to think about a world without doors he wrote:

“[…] imagine people destroying walls and rebuilding them every time they wish to enter or leave the building… or the work that would have to be done to keep inside or outside all the things and people that left to themselves would go the wrong way.”49

A beautiful thought experiment and indeed unimaginable—however, not in a computer generated world where we don’t need doors really. You can go through walls, you can have no walls at all, you can introduce rules that would make walls obsolete. These rules and contracts—not behaviors of knobs—are the future of user interfaces, so we have to be very thoughtful about the education of interface designers.

***

There are two more concepts I promised in the title but didn’t say a word about yet: Forgiveness and Human Robot Interaction (HRI).

My questions are: How does the preoccupation with strong clues and strictly bound experiences—affordance and UX—affect the beautiful concept of “forgiveness” that theoretically would have to be a part of every interactive system? And how do concepts of transparency, affordance, form follows function, form follows emotion,50 user experience, and forgiveness refract in HRI?

I’ll start with forgiveness. The following is a quote from Apple’s 2006 Human Interface Guidelines, which I think gives a very good idea of what exactly is meant by forgiveness when it comes to user interfaces.51

transcription

Forgiveness

Encourage people to explore your application by building in forgiveness—that is, making most actions easily reversible. People need to feel that they can try things without damaging the systems or jeopardizing their data. Create safety nets, such as Undo and Revert to Saved commands, so that people will feel comfortable learning and using your product.

Warn users when they initiate a task that will cause irreversible loss of data. If alerts appear frequently, however, it may mean that the product has some design flaws. When options are presented clearly and feedback is timely, using an application should be relatively error-free.

Anticipate common problems and alert users to potential side effects. Provide extensive feedback and communication at every stage so users feel that they have enough information to make the right choices. For an overview of different types of feedback you can provide, see “Feedback and Communication” (page 42).

Its essence is making actions reversible, offering users stable perceptual cues for a sense of “home,” and always allowing “Undo.”
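For readers who build interfaces, the guideline's core idea of reversible actions can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Apple's implementation: the `Document` class and its method names are hypothetical, and the sketch simply keeps a snapshot of the previous state before every change.

```python
class Document:
    """Hypothetical text document in which every edit can be undone."""

    def __init__(self, text=""):
        self.text = text
        self._saved = text    # state at the last save, for "Revert to Saved"
        self._history = []    # earlier states, most recent last

    def edit(self, new_text):
        # Remember the current state before changing it.
        self._history.append(self.text)
        self.text = new_text

    def undo(self):
        # Restore the previous state; do nothing if there is none.
        if self._history:
            self.text = self._history.pop()

    def save(self):
        self._saved = self.text

    def revert_to_saved(self):
        # Reverting is recorded like any other edit, so it can be undone too.
        self.edit(self._saved)


doc = Document("hello")
doc.save()
doc.edit("hello world")
doc.edit("")    # an accidental deletion
doc.undo()      # the deletion is forgiven
```

The design choice worth noticing is that even "Revert to Saved" goes through `edit`, so no action in this sketch is irreversible.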

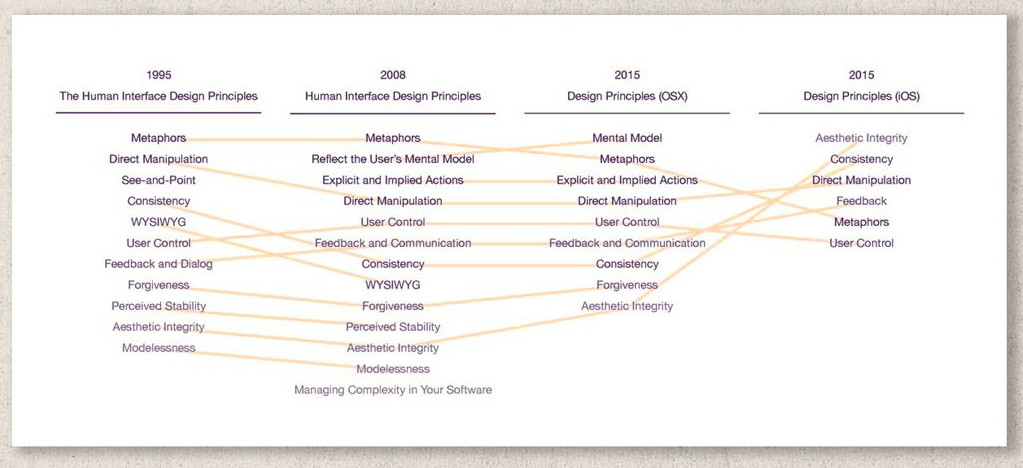

In 2015 Bruce Tognazzini and Don Norman noticed that forgiveness as a principle had vanished from Apple’s guidelines for iOS and wrote the angry article How Apple Is Giving Design A Bad Name.52 Bruce Tognazzini himself has authored eight editions of Apple’s Human Interface Design Guidelines, starting in 1978,53 and is known for conceptualizing interface design in the context of illusion and stage magic.

Diagram tracing the changes in core principles of Apple’s guidelines over time, by Michael Meyer.

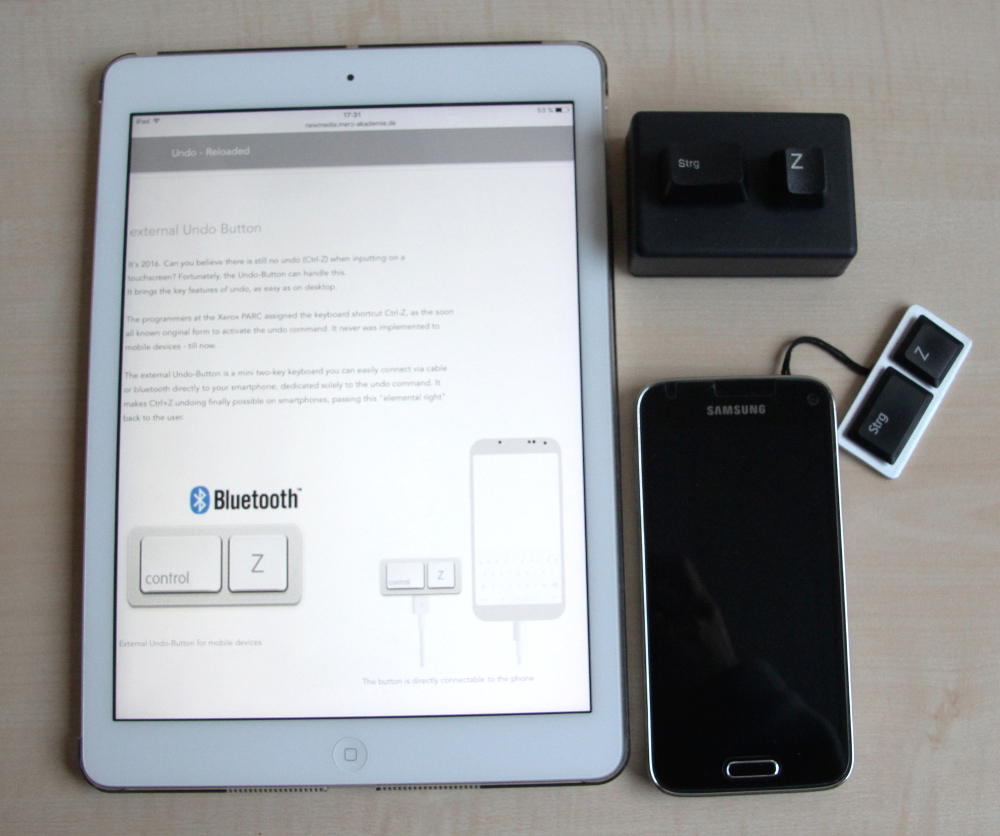

Users of Apple, Android, and all other mobile phones without keyboards noticed the disappearance of forgiveness even earlier, because there was no equivalent to ⌘-Z or Ctrl-Z on their devices. They noticed but didn’t protest.

Metez, Teja. “External Undo Button.” Undo - Reloaded, 2015.

In my view of the world Undo should be a constitutional right. It is the top demand on my project User Rights.54 In addition to the many things I said in support of Undo elsewhere, in the context of this talk I’d like to emphasize that all the hype around affordances and UX developed in parallel with the disappearance of Undo; this is not a coincidence. Single-purpose applications with one button per screen would guide users through life without a need for Undo.

Though what users really need from operating system vendors is a global Undo function. It could have been the only contract, it could be a world where further discussions about affordances would be obsolete.

***

Being part of New Media dynamics, the field of HCI is very vibrant and very “pluralistic.” Tasks for interface designers are to be found far beyond the screens of personal computers and submit buttons. There are new challenges like Virtual Reality and Augmented Reality, Conversation and Voice User Interfaces, even Brain Computer Interaction. These fields are not new in themselves; they are contemporaries of the GUI, and by calling them new I rather mean “trending right now” or “trending right now again” in HCI papers and in mass media.

The last few years were all about artificial intelligence, neural networks and anthropomorphic robots, in movies, literature, and consumer products. I adjusted my curriculum as well and introduced rewriting an ELIZA55 script to my interface design course, so that students prepare themselves for designing interfaces that talk to the users and pretend that they understand them. I personally have a bot,56 and this talk will be fed to its algorithm and will become a part of the bot’s performance. Some more years and this bot might be injected into a manufactured body looking something like me and will go to give lectures in my place.

Watching films and TV series where robots are the main protagonists, following Sophia’s57 adventures in the news, regular people dive into issues that were exotic only some time ago: the difference between symbolic and strong AI, ethics of robotics, trans-humanism.

The omnipresence of robots, even if just mediated, provokes delusions:

“We expect our intelligent machines to love us, to be unselfish. By the same measure we consider their rising against us to be the ultimate treason.”58 (Zarkadakis)

Delusions lead to paradoxes:

“Robots which enchant us into increasingly intense relationships with the inanimate, are here proposed as a cure for our too-intense immersion in digital connectivity. Robots, the Japanese hope, will pull us back toward the physical real and thus each other.”59 (Turkle)

Paradoxes lead to more questions:

“Do we really want to be in the business of manufacturing friends that will never be friends?”60 (Turkle)

Should robots have rights? Should robots and bots be required to reveal themselves as what they are?

The last question suddenly entered the discourse after Google’s recent demo of Duplex,61 causing internet users to debate if Google’s assistant should be allowed to say “hmmm,” “oh,” “errr,” or to use interjections at all.

ITU Pictures. Sophia, First Robot Citizen at the AI for Good Global Summit 2018. AI for Good Global Summit 2018. May 15, 2018. Photo.

Without even noticing, we, the general public, are discussing not only ethical but also interface design questions and decisions. And I wish or hope it will stay like this for some time.

“Why Is Sophia’s (Robot) Head Transparent?”62 users ask the internet, posing another design question: is it just to look like Ex Machina, or is it for better maintenance? Or maybe it marks a comeback of transparency in the initial, pre-Macintosh meaning of the word?

Curiously, when scientists and interaction designers talk about transparency at the moment, they oscillate between two meanings: exposing and explaining algorithms, and simplicity of communication with a robot:

Designing and implementing transparency for real time inspection of autonomous robots63

Robot Transparency: Improving Understanding of Intelligent Behaviour for Designers and Users64

Improving robot transparency: real-time visualisation of robot AI substantially improves understanding in naive observers65

The researcher Joanna J. Bryson, co-author of the aforementioned papers, has a very clear position on ethics. “Should Robots have rights?” is not a question for her. Instead, she asks why we should design machines that raise such questions in the first place.66

However, there are enough studies proving that humanoids (anthropomorphic robots) that perform morality are the right approach for situations where robots work with and not instead of people: the social robot scenario, where “social robot is a metaphor that allows human like communication patterns between humans and machines.”67 This is quoted from Frank Hegel’s article Social Robots: Interface Design between Man and Machine, a text that truly impressed me some time ago, though it doesn’t announce anything revolutionary; on the contrary, it states quite obvious things like “human-likeness in robots correlates highly with anthropomorphism”68 or “aesthetically pleasing robots are thought to possess more social capabilities […]”69

Very calmly, almost between the lines, Hegel introduces the principle for a proper, fair robot design: the “fulfilling anthropomorphic form,”70 which should immediately lead humans to understand a robot’s purpose and capabilities. Affordance for a new age.

Robots are here. They are not industrial machines but social, or even “lovable,” ones; their main purpose is not to replace people but to be among people. They are anthropomorphic, and they look more and more realistic. They have eyes... but not because they need them to see. Their eyes are there to inform us that seeing is one of the robot’s functions. If a robot has a nose, it is to inform the user that it can detect gas and pollution; if it has arms, it can carry heavy stuff; if it has hands, it is to grab smaller things; if these hands have fingers, you expect it can play a musical instrument. Robots’ eyes beam usability, their bodies express affordances. Faces literally become an interface.

Back to Norman’s wisdom:

“Affordances provide strong clues to the operations of things. Plates are for pushing. Knobs are for turning. Slots are for inserting things into. Balls are for throwing or bouncing. When affordances are taken advantage of, the user knows what to do just by looking: no picture, label, or instruction needed.”71

Manual affordances (“strong clues”) are easy to comprehend and accept when they are part of a GUI: they are graphically represented and located, somewhere... on screen. Things got more complex for designers and users when we moved to the so-called “post GUI,” to gestures in virtual, augmented, and invisible space. Yet this cannot be compared with the astonishing level of complexity we face when our thoughts move from Human Computer Interaction to Human Robot Interaction.

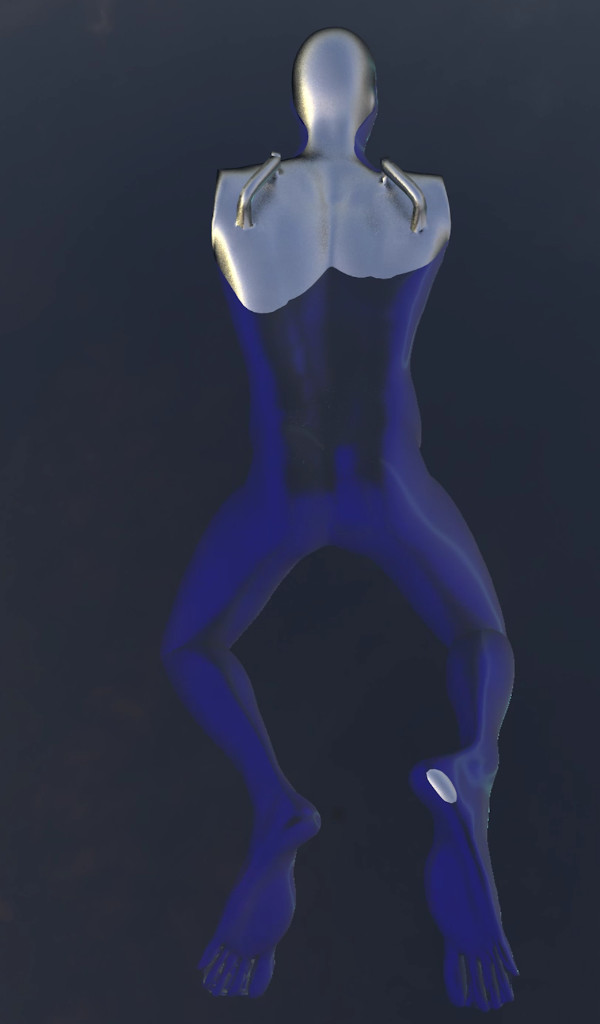

Andreas Eisenhut, Concept for Swimming Lifesaver Robot, Video Still, June 2018.

The image above is from a selection of student sketches. I asked the students to embrace the principle of the fulfilling anthropomorphic form and take it to the limit. What could an anthropomorphic design be if everything that doesn’t signal a function is removed? If the robot can’t smell, there is just no nose. And why have two hands if you only need one? What could this un-ambiguity mean for interaction and product design?

And tonight’s final question: How does the HCI principle of forgiveness appear in HRI? In contrast to the current situation in graphical and touch-based user interfaces, forgiveness is doing very well in the realms of robots and AI.

It is built in: “[t]he external observer of an intelligent system can’t be separated from the system.”72 Robot companions are here “[n]ot because we have built robots worthy of our company but because we are ready for theirs” and “[t]he robots are shaping us as well, teaching us how to behave so they can flourish.”73 These quotes from Turkle and Zarkadakis remind us of Licklider’s man-computer-symbiosis, Engelbart’s concept of bootstrapping, and other advanced projections for the coexistence of man and computer, just this time it is about man and robot, not man and computer-on-the-table situations.

Forgiveness is built-in, but in HRI it is built into the human part. It is all on our side.

We are witnessing how the most valuable concept of HCI—Undo—meets a fundamental principle of Symbolic AI—scripting the human interactor.74 I’m curious to see what affordances will further emerge. And who will undo whom when Symbolic AI is replaced by a “Strong” or “Real” AI as they say now.

***

Olia Lialina, June 2018

References

See: “Symposium,” Rethinking Affordance, accessed August 15, 2018.↩

David J Bolter and Diane Gromala, Windows and Mirrors: Interaction Design, Digital Art, and the Myth of Transparency (MIT Press, 2003), 35.↩

David J Bolter and Richard Grusin, Remediation: Understanding New Media (MIT Press, 2000).↩

“Rethinking Affordance,” Rethinking Affordance, accessed August 3, 2018.↩

Johannes Osterhoff, iPhone live, 2012, Online Performance.↩

Johannes Osterhoff, Dear Jeff Bezos, January 12, 2013, Online Performance.↩

Brenda Laurel, ed., The Art of Human-Computer Interface Design, 1st ed. (Addison Wesley, 1990).↩

Donald Norman, “Why Interfaces Don’t Work,” in The Art of Human-Computer Interface Design, ed. Brenda Laurel (Addison-Wesley, 1990), 210.↩

Norman, 217.↩

Norman, 217.↩

Norman, 218.↩

Sherry Turkle, The Second Self: Computers and the Human Spirit (MIT Press, 2004), 7.↩

Jef Raskin, The Humane Interface. New Directions for Designing Interactive Systems. (Reading, Mass: Pearson Education, 2000), 8.↩

Donald A. Norman, The Psychology of Everyday Things (New York: Basic Books, 1988).↩

Norman, “Why Interfaces Don’t Work,” 218.↩

Norman, 218.↩

Joseph C. R. Licklider, “Man-Computer Symbiosis,” in The New Media Reader, ed. Noah Wardrip-Fruin and Nick Montfort (The MIT Press, 2003), 75.↩

Thierry Bardini, Bootstrapping: Douglas Engelbart, Coevolution, and the Origins of Personal Computing, 1st ed. (Stanford University Press, 2000), 24. “Engelbart took what he called ‘a bootstrapping approach,’ considered as an iterative and coadaptive learning experience.”↩

Vilém Flusser, Medienkultur, 5th ed. (Frankfurt am Main: FISCHER Taschenbuch, 1997).↩

Flusser, 213.↩

Brenda Laurel, The Art of Human-Computer Interface Design, 1st ed. (Reading, Mass: Addison Wesley Pub Co Inc, 1990), xii.↩

Bruno Latour, “Where Are the Missing Masses?,” in Shaping Technology / Building Society: Studies in Sociotechnical Change, ed. Wiebe E. Bijker et al., Reissue edition (Cambridge, Mass.: The MIT Press, 1994), 225–59.↩

Victor Kaptelinin, “Affordances,” in The Encyclopedia of Human-Computer Interaction, 2nd ed. (Interaction Design Foundation), accessed July 28, 2018.↩

Joanna McGrenere and Wayne Ho, “Affordances: Clarifying and Evolving a Concept,” 2000, 8.↩

McGrenere and Ho, 3.↩

Don Norman, “Affordances and Design,” Don Norman: Designing For People, accessed July 28, 2018.↩

That should remind us of another term that has existed in HCI since 1970, at least at the Xerox PARC lab: User Illusion, which at the end of the day is the same principle, and also a foundation of interfaces as we know them. “At PARC we coined the phrase user illusion to describe what we were about when designing user interfaces.” See: Alan Kay, “User Interface: A Personal View,” in The Art of Human-Computer Interface Design, ed. Brenda Laurel (Reading, MA: Addison-Wesley, 1990), 191–207.↩

Don Norman, “Affordance, Conventions and Design (Part 2),” Don Norman: Designing For People, accessed August 20, 2018.↩

Tubik Studio, “UX Design Glossary: How to Use Affordances in User Interfaces,” UX Planet, May 8, 2018.↩

Paula Borowska, “6 Types of Digital Affordance That Impact Your UX,” Webdesigner Depot (blog), April 7, 2015.↩

“User-Centered Design,” Wikipedia, July 24, 2018.↩

Peter Merholz, “Peter in Conversation with Don Norman About UX & Innovation,” Adaptive Path, accessed July 29, 2018.↩

Olia Lialina, “Rich User Experience, UX and Desktopization of War,” January 2015.↩

Olia Lialina, “Turing Complete User,” October 2012.↩

Johannes Osterhoff to Olia Lialina, June 3, 2018.↩

Florian Dusch to Olia Lialina, June 2, 2018.↩

Golden Krishna, The Best Interface Is No Interface: The Simple Path to Brilliant Technology, 1st ed. (Berkeley, California: New Riders, 2015), 47.↩

Golden Krishna, “Golden Krishna,” August 8, 2016.↩

Marc Hassenzahl, “Experience Design,” Experience Design, accessed July 30, 2018.↩

Marc Hassenzahl and John Carroll, Experience Design: Technology for All the Right Reasons, New (San Rafael, Calif.: Morgan and Claypool Publishers, 2010), 12.↩

Marc Hassenzahl, “User Experience and Experience Design,” in User Experience and Experience Design (Interaction Design Foundation), accessed July 28, 2018.↩

Norman, “Why Interfaces Don’t Work,” 218.↩

Jon Crabb, “100 Example UX Problems,” UX Collective, April 17, 2018.↩

Don Norman, “Commentary by Donald A. Norman,” in The Encyclopedia of Human-Computer Interaction, 2nd ed. (Interaction Design Foundation), accessed July 28, 2018.↩

Sam Machkovech, “Mark Zuckerberg Announces Facebook Dating,” Ars Technica, May 1, 2018.↩

Alan Cooper, Robert Reimann, and David Cronin, About Face 3: The Essentials of Interaction Design, 3rd ed. (Indianapolis, IN: Wiley, 2007), 284.↩

Cooper, Reimann, and Cronin, 285.↩

Freeman J. Dyson et al., Technology and Society: Building Our Sociotechnical Future, ed. Deborah G. Johnson et al. (Cambridge, Mass: The MIT Press, 2008), 154.↩

“Form follows emotion” is a credo of the German industrial designer Hartmut Esslinger, which became a slogan for “frog,” the company he founded in 1969. See: “Frog Design. About Us.,” accessed August 18, 2018; “FORM FOLLOWS EMOTION,” Forbes.com, accessed August 18, 2018.↩

Apple Human Interface Guidelines (Apple Computer, Inc, 2006), 45.↩

Bruce Tognazzini and Don Norman, “How Apple Is Giving Design A Bad Name,” Fast Company, November 10, 2015.↩

See: Bruce Tognazzini, “About Tog,” AskTog (blog), November 17, 2012.↩

Olia Lialina, User Rights, Website, October 2013.↩

N. Landsteiner, “Eliza (Elizabot.Js),” mass:werk, 2005.↩

Olia Lialina, “GIFmodel_ebooks,” Twitter Bot, 2015.↩

Ean Schuessler, “Sophia,” Hanson Robotics (blog), accessed July 28, 2018.↩

George Zarkadakis, In Our Own Image: Savior or Destroyer? The History and Future of Artificial Intelligence, 1st ed. (Pegasus Books, 2017), 51.↩

Sherry Turkle, Alone Together: Why We Expect More from Technology and Less from Each Other (New York, NY: Basic Books, 2012), 147.↩

Turkle, 101.↩

Jeffrey Grubb, Google Duplex: A.I. Assistant Calls Local Businesses To Make Appointments, accessed July 28, 2018.↩

“Why Is Sophia’s (Robot) Head Transparent?”, Quora, 2018.↩

Andreas Theodorou, Robert H. Wortham, and Joanna J. Bryson, “Designing and Implementing Transparency for Real Time Inspection of Autonomous Robots,” Connection Science 29, no. 3 (May 30, 2017): 230–41.↩

Robert H. Wortham, Andreas Theodorou, and Joanna J. Bryson, “Robot Transparency: Improving Understanding of Intelligent Behaviour for Designers and Users,” 2017.↩

Robert H. Wortham, Andreas Theodorou, and Joanna J. Bryson, “Improving Robot Transparency: Real-Time Visualisation of Robot AI Substantially Improves Understanding in Naive Observers,” 2017.↩

See: Theodorou.↩

Frank Hegel, “Social Robots: Interface Design between Man and Machine,” in Interface Critique, ed. Florian Hadler and Joachim Haupt (Berlin: Kulturverlag Kadmos, 2016), 104.↩

Hegel, “Social Robots: Interface Design between Man and Machine,” 111.↩

Hegel, 112.↩

Hegel, “Social Robots: Interface Design between Man and Machine,” 106.↩

Mads Soegaard, Affordances, accessed July 30, 2018.↩

Zarkadakis, In Our Own Image, 71.↩

Sherry Turkle, Alone Together: Why We Expect More from Technology and Less from Each Other (New York, NY: Basic Books, 2012), 55.↩

“A successful chatterbot author must therefore script the interactor as well as the program, must establish a dramatic framework in which the human interactor knows what kinds of things to say […]” Janet H. Murray, Hamlet on the Holodeck: The Future of Narrative in Cyberspace, 1st ed. (New York: Free Press, 1997), 202.↩